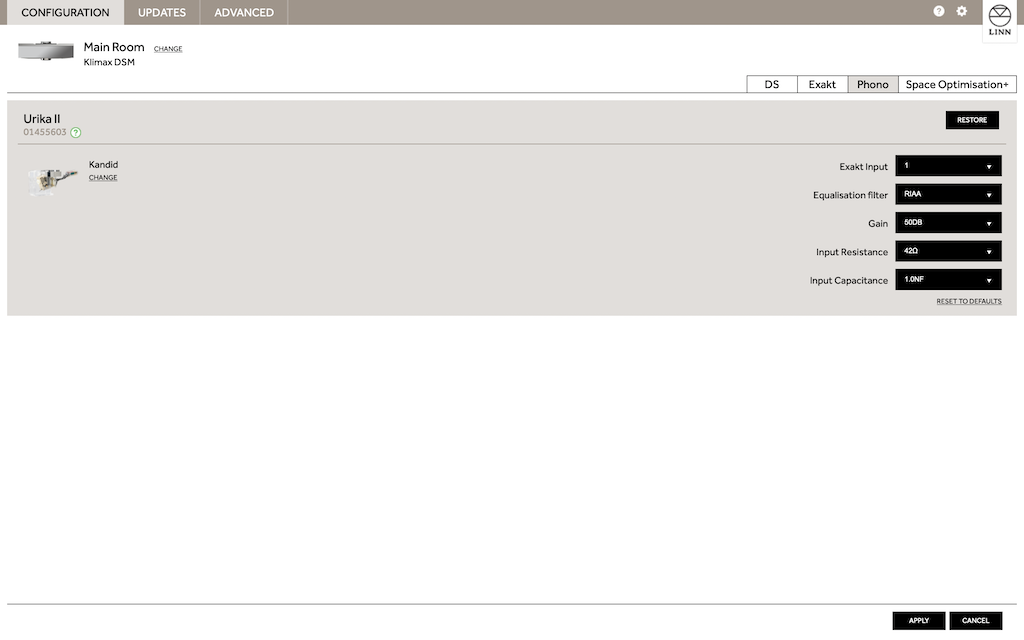

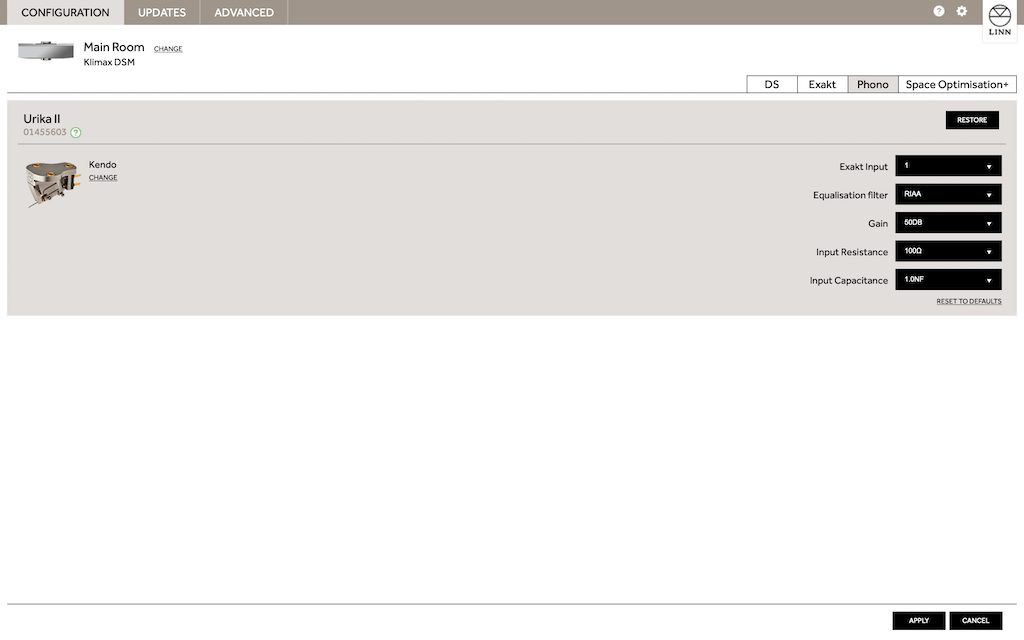

There are many ways to skin the RIAA cat even in the analog domain: whether to buffer between each time constant or combine them, and whether to apply some of the filtering in a feedback network. Throwing in digital filtering gives more choices. Splitting the time constants, with some handled in the analog domain and the rest in digital, is also possible, and it seems that is Linn's choice.
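Splitting works because the RIAA playback curve is a product of independent first-order sections, so their dB responses simply add. Here is a minimal Python sketch. It assumes the standard RIAA time constants (3180µs, 318µs, 75µs) and a purely hypothetical split, with the bass pole and shelf zero in analog and the 75µs treble pole in software. I don't know how Linn actually divides them. It compares ideal magnitude responses only, so it ignores the approximation error a real discrete-time filter would add.

```python
import math

# Assumption: standard RIAA playback time constants in seconds (not from the post).
T1, T2, T3 = 3180e-6, 318e-6, 75e-6  # bass pole, shelf zero, treble pole


def section_db(f, tau, is_zero):
    """Magnitude in dB of one first-order section: zero (1 + jwT) or pole 1/(1 + jwT)."""
    w = 2 * math.pi * f
    mag_db = 10 * math.log10(1 + (w * tau) ** 2)  # equals 20*log10|1 + jwT|
    return mag_db if is_zero else -mag_db


def full_curve_db(f):
    """Whole playback curve evaluated as one transfer function (not normalized)."""
    s = 2j * math.pi * f
    h = (1 + s * T2) / ((1 + s * T1) * (1 + s * T3))
    return 20 * math.log10(abs(h))


# Hypothetical split for illustration only; not Linn's documented design.
def analog_stage_db(f):
    return section_db(f, T1, is_zero=False) + section_db(f, T2, is_zero=True)


def digital_stage_db(f):
    return section_db(f, T3, is_zero=False)


for f in (20, 1000, 20000):
    split = analog_stage_db(f) + digital_stage_db(f)
    print(f"{f:>6} Hz  one stage {full_curve_db(f):8.3f} dB  split {split:8.3f} dB")
```

Any other grouping of the three sections gives the same total. Moving a section between domains changes where the gain and noise appear, not the overall curve.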

I do want to follow up on your comment that you cannot apply all the RIAA filtering in digital: it can be done safely. The link below is to an AES paper by Rob Robinson of Channel D, who makes a range of phono preamps. He has been a proponent of digital RIAA for many years and includes an RIAA bypass switch in his phono preamps to allow this. He also happens to write software to do the digital RIAA. The paper is a little involved, so here is my TL;DR summary:

The obvious concern with sending an un-equalized signal to an ADC is that the RIAA-boosted treble will eat up the dynamic range, leaving the low frequencies captured at low resolution. As a recap, the RIAA pre-emphasis applied to the signal driving the cutting head cuts the lowest frequencies by about 20dB (10x) relative to 1kHz and boosts the highest frequencies by about 20dB (more precisely, roughly 19.3dB at 20Hz and 19.6dB at 20kHz). It turns out, though, that neither concern is a significant problem.
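Those recap figures can be checked from the RIAA curve itself. The sketch below assumes the standard time constants (3180µs, 318µs, 75µs), which are not given in the post. It evaluates the playback curve relative to 1kHz and inverts it to get the cutting-side pre-emphasis. The result is about -19.3dB at 20Hz and +19.6dB at 20kHz. The two ends differ by roughly 39dB, a little under 100x in amplitude.

```python
import math

# Assumption: standard RIAA playback time constants in seconds.
T1, T2, T3 = 3180e-6, 318e-6, 75e-6


def playback_gain(f):
    """|H(f)| of the RIAA playback (de-emphasis) curve, not normalized."""
    s = 2j * math.pi * f
    return abs((1 + s * T2) / ((1 + s * T1) * (1 + s * T3)))


def recording_db(f):
    """Cutting-side pre-emphasis in dB relative to 1 kHz (the inverse of playback)."""
    return 20 * math.log10(playback_gain(1000) / playback_gain(f))


for f in (20, 1000, 20000):
    print(f"{f:>6} Hz: {recording_db(f):+6.1f} dB on the record vs 1 kHz")

# Level gap between the boosted treble and the cut bass at the ADC input.
spread_db = recording_db(20000) - recording_db(20)
print(f"20 kHz vs 20 Hz: {spread_db:.1f} dB, about {10 ** (spread_db / 20):.0f}x in amplitude")
```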

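Relative to the treble, the lowest bass frequencies may arrive at the ADC about 100 times (roughly 40dB) lower, because the 20dB bass cut and the 20dB treble boost add up. If the treble sets the voltage peak, the bass therefore sits far down in the converter's range, yet it is still captured with full resolution. This is because bass frequencies are effectively oversampled by a large amount, which makes up for the loss in resolution due to the lower amplitude. The ADC's quantization noise is spread across the whole band up to half the sample rate, so only a small fraction of it lands in the narrow band the bass occupies. If the ADC samples at 96kHz, that is about 2.4x oversampling for 20kHz, but about 2400x for a 20Hz signal.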
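The oversampling arithmetic can be sketched with the textbook estimate for a plain (non-noise-shaped) converter. That estimate assumes the quantization noise is white and spread evenly from 0Hz to half the sample rate. Only the part of that band the signal occupies contributes noise, so every doubling of the oversampling ratio buys about 3dB, or half a bit. For a 96kHz ADC this gives 2.4x at 20kHz and 2400x at 20Hz. Treating the bass as an idealized 0 to 20Hz band is a simplification, and this is not the analysis from the paper.

```python
import math

FS = 96_000  # ADC sample rate used in the example above (Hz)
DB_PER_BIT = 20 * math.log10(2)  # about 6.02 dB per bit


def oversampling_ratio(f_max, fs=FS):
    """How many times faster than the Nyquist rate (2 * f_max) the ADC samples."""
    return fs / (2 * f_max)


def processing_gain_db(f_max, fs=FS):
    """In-band noise reduction in dB.

    Assumption: white quantization noise spread evenly over 0..fs/2, so only the
    fraction f_max / (fs / 2) of the noise power falls inside the band 0..f_max.
    """
    return 10 * math.log10(oversampling_ratio(f_max, fs))


for f in (20_000, 20):
    gain = processing_gain_db(f)
    print(f"{f:>6} Hz: {oversampling_ratio(f):7.1f}x oversampling, "
          f"noise {gain:4.1f} dB lower (~{gain / DB_PER_BIT:.1f} bits)")
```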

The highest frequencies turn out to be less of a problem than first expected, because the energy in music rolls off above about 1kHz. So, even though RIAA boosts the treble, once the natural treble roll-off is taken into account the peak levels of high frequencies are only about 6dB (2x) above 1kHz, not the expected 20dB. Mr. Robinson reaches that conclusion from a study of many records in which he measured peak amplitudes and their frequency distribution. So the treble typically uses up a bit more ADC range than the mid-band, but only by about 1 bit, which is not a lot.
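The "about 1 bit" figure follows from each bit of ADC range being a factor of 2 in amplitude, or about 6.02dB. The quick check below compares the measured 6dB treble excess with the naive 20dB.

```python
import math

DB_PER_BIT = 20 * math.log10(2)  # one bit = 2x amplitude, about 6.02 dB


def bits_used(excess_db):
    """Extra ADC bits taken up by peaks sitting excess_db above the mid-band level."""
    return excess_db / DB_PER_BIT


for label, excess in (("measured treble excess", 6), ("naive RIAA boost", 20)):
    print(f"{label:>22}: {excess:2d} dB -> {bits_used(excess):.1f} bits")
```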

Finally, Mr. Robinson argues that letting the treble set the largest signal seen by the ADC is better than applying RIAA de-emphasis before the ADC. With the un-equalized signal, the high frequencies are captured at the fullest resolution, and the lower frequencies are covered by progressively more oversampling. If RIAA de-emphasis is applied first, the largest peaks come from the mid-band and use up most of the ADC range. The treble, now cut by up to 20dB, is then captured with less resolution.
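Here is what applying the de-emphasis after the ADC might look like, as a minimal Python sketch of a digital RIAA playback filter. It is not Mr. Robinson's software. It assumes the standard time constants and uses a plain bilinear transform of the analog curve into a single biquad, normalized to 0dB at 1kHz. The bilinear transform warps frequencies near Nyquist, so at 96kHz this simple version falls roughly 1.4dB below the ideal curve at 20kHz. A serious implementation would need to correct for that, for example by running at a higher internal rate or adjusting the filter design.

```python
import cmath
import math

# Assumption: standard RIAA playback time constants in seconds.
T1, T2, T3 = 3180e-6, 318e-6, 75e-6


def freq_response(b, a, f, fs):
    """Complex response of a biquad (b, a) at frequency f for sample rate fs."""
    z1 = cmath.exp(-2j * math.pi * f / fs)  # z^-1 on the unit circle
    return (b[0] + b[1] * z1 + b[2] * z1**2) / (a[0] + a[1] * z1 + a[2] * z1**2)


def riaa_biquad(fs):
    """Bilinear transform of H(s) = (1 + s*T2) / ((1 + s*T1)(1 + s*T3)), 0 dB at 1 kHz."""
    k = 2 * fs  # substitution s -> k * (1 - z^-1) / (1 + z^-1)

    def first_order(t):
        # (1 + s*t) becomes ((1 + k*t) + (1 - k*t) z^-1) / (1 + z^-1)
        return 1 + k * t, 1 - k * t

    n0, n1 = first_order(T2)
    p0, p1 = first_order(T1)
    q0, q1 = first_order(T3)
    # The numerator keeps one leftover (1 + z^-1) factor from the second-order denominator.
    b = [n0, n0 + n1, n1]
    a = [p0 * q0, p0 * q1 + p1 * q0, p1 * q1]
    b = [x / a[0] for x in b]
    a = [x / a[0] for x in a]
    gain = 1 / abs(freq_response(b, a, 1000, fs))  # normalize to 0 dB at 1 kHz
    return [x * gain for x in b], a


def apply_filter(b, a, samples):
    """Direct form I: y[n] = b0 x[n] + b1 x[n-1] + b2 x[n-2] - a1 y[n-1] - a2 y[n-2]."""
    x1 = x2 = y1 = y2 = 0.0
    out = []
    for x0 in samples:
        y0 = b[0] * x0 + b[1] * x1 + b[2] * x2 - a[1] * y1 - a[2] * y2
        x2, x1 = x1, x0
        y2, y1 = y1, y0
        out.append(y0)
    return out


FS = 96_000
b, a = riaa_biquad(FS)
for f in (20, 1000, 20000):
    print(f"{f:>6} Hz: {20 * math.log10(abs(freq_response(b, a, f, FS))):+6.2f} dB")
```

Because this filter runs after the ADC, the bass boost and treble cut are applied to the samples and to the converter's quantization noise alike. That is the trade-off the paper's argument rests on.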

http://www.channld.com/aes123.pdf
